Google has published a new family of large language models Gemma 4, based on the technologies of the model Gemini 3. Gemma 4 is distributed under the Apache license in versions with 2.3, 4.5, 25.2 and 30.7 billion parameters (E2B, E4B, 31B and 26B A4B). The E2B and E4B variants are suitable for use on mobile devices, Internet of Things (IoT) systems and boards such as Raspberry Pi, while the remaining variants are suitable for use on workstations and systems with consumer GPUs. The size of the context taken into account by the model is 128 thousand tokens for models E2B and E4B, and 256 thousand tokens for models 31B and 26B A4B.

The models are multilingual and multimodal: 35 languages are supported out of the box (more than 140 languages were used during training), and text and images can be processed at the input (models E2B and E4B additionally support audio processing). Model 26B A4B is based on the MoE (Mixture-of-Experts) architecture, in which the model is not divided into a series of expert networks (only 3.8 billion parameters can be used when generating a response, but the speed is significantly higher than classical large models), and the remaining options use a classic monolithic architecture.

Models support reasoning and customizable deliberation modes, support System Role for processing instructions (rules, restrictions) separately from data. Models can be used for coding, object recognition in images, frame-by-frame video analysis, document and PDF parsing, optical character recognition (OCR), speech recognition, and translation between languages. Can be used as autonomous agents that interact with various tools and APIs.

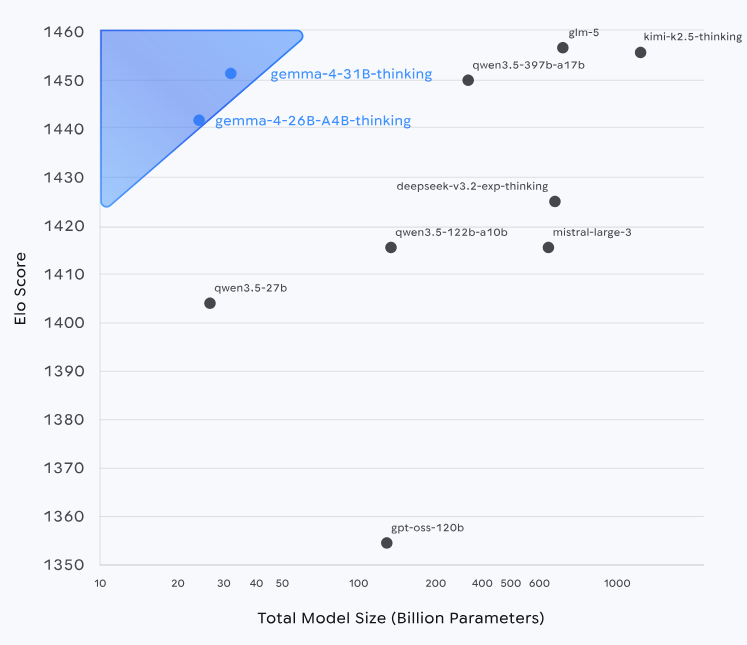

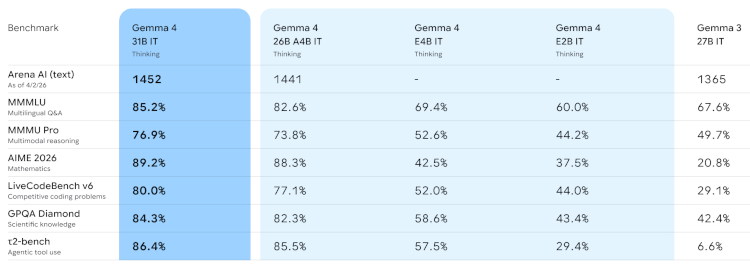

In most tests the Gemma 4 series models significantly outperformed the Gemma 3 model with 27 billion parameters. Gemma 4 is supported for use with tools and libraries LiteRT-LM, vLLM, llama.cpp, MLX, Ollama, NVIDIA NIM and NeMo, LM Studio, Unsloth, SGLang, Cactus, Basetan, MaxText, Tunix and Keras.