A set of patches has been proposed for the Linux kernel that implements DRBD 9, a distributed replicated block device. DRBD makes it possible to build something like a RAID-1 array from drives that are mirrored over the network and attached to different systems. The aim is to test the driver in the linux-next branch and prepare it for integration into the Linux 7.2 kernel.

DRBD has been part of the kernel since version 2.6.33, released 16 years ago. However, the in-kernel code is based on the older DRBD 8 branch and has not kept pace with the DRBD 9 branch, released in 2015. DRBD 9 has instead been developed as a separate external module that is not synchronized with the in-kernel code. The proposed patches aim to close this gap by updating the implementation in the Linux kernel.

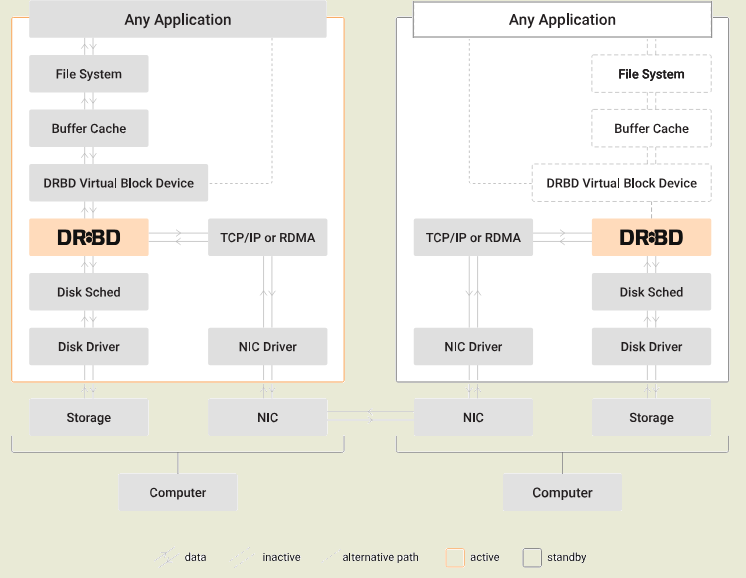

DRBD enables the combination of cluster nodes’ drives into a fault-tolerant storage system. From the perspective of applications and the system, this storage appears as a block device that is consistent across all systems. With DRBD, local disk operations are replicated to other nodes and synchronized with their disks. In case of node failure, the storage continues to function using the remaining nodes until the failed node is restored and synchronized automatically.
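The behavior described above can be illustrated with a toy model in Python. This is only a sketch under simplifying assumptions, not DRBD's implementation: the names `Node`, `MirroredDevice`, `fail`, and `restore` are hypothetical, blocks live in memory, and the set of blocks a failed node has missed stands in for whatever bookkeeping the real driver uses.

```python
class Node:
    """One cluster member holding a full copy of the device (hypothetical)."""

    def __init__(self, name, num_blocks):
        self.name = name
        self.blocks = [b""] * num_blocks
        self.online = True


class MirroredDevice:
    """Toy replicated block device: every write goes to all online nodes."""

    def __init__(self, node_names, num_blocks):
        self.nodes = {n: Node(n, num_blocks) for n in node_names}
        # Per-node set of block indexes written while that node was offline.
        self.out_of_sync = {n: set() for n in node_names}

    def _online(self):
        return [n for n in self.nodes.values() if n.online]

    def write(self, index, data):
        if not self._online():
            raise OSError("no node available")
        for node in self.nodes.values():
            if node.online:
                node.blocks[index] = data
            else:
                # Remember what the offline node missed for later resync.
                self.out_of_sync[node.name].add(index)

    def read(self, index):
        online = self._online()
        if not online:
            raise OSError("no node available")
        return online[0].blocks[index]

    def fail(self, name):
        self.nodes[name].online = False

    def restore(self, name):
        online = self._online()
        if not online:
            raise OSError("no up-to-date node to resynchronize from")
        source, node = online[0], self.nodes[name]
        # Copy only the blocks that changed while the node was away.
        for index in sorted(self.out_of_sync[name]):
            node.blocks[index] = source.blocks[index]
        self.out_of_sync[name].clear()
        node.online = True


dev = MirroredDevice(["alpha", "bravo", "charlie"], num_blocks=4)
dev.write(0, b"boot")
dev.fail("bravo")          # storage keeps working on the remaining nodes
dev.write(1, b"data")
dev.restore("bravo")       # bravo catches up on block 1 only
```

Tracking only the missed blocks keeps resynchronization proportional to what changed during the outage rather than to the size of the device.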

Such a clustered storage system can span up to 32 nodes, located either on a local network or in geographically dispersed data centers. Synchronization in these setups runs over a mesh network, with data propagating from node to node along a chain. Replication can be synchronous or asynchronous, and traffic to remote nodes can additionally be compressed and encrypted. DRBD 9's abstraction of the transport layer allows communication over RDMA/InfiniBand in addition to TCP/IP, doubling replication performance while reducing CPU load by 50%.
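The difference between the two replication modes can be sketched in a few lines of Python. This is an illustrative model only, with hypothetical names (`Peer`, `Replicator`, `flush`); it does not reflect DRBD's actual protocol or code. In synchronous mode a write is reported complete only after the peer has applied it, while in asynchronous mode the write is queued and sent later.

```python
from collections import deque


class Peer:
    """Remote node that stores replicated blocks (hypothetical)."""

    def __init__(self):
        self.blocks = {}

    def apply(self, index, data):
        self.blocks[index] = data
        return True  # acknowledgment sent back to the writer


class Replicator:
    """Local node that mirrors its writes to one peer."""

    def __init__(self, peer, synchronous):
        self.local = {}
        self.peer = peer
        self.synchronous = synchronous
        self.pending = deque()  # writes not yet delivered (async mode only)

    def write(self, index, data):
        self.local[index] = data
        if self.synchronous:
            # Completion is reported only once the peer has acknowledged.
            return self.peer.apply(index, data)
        # Asynchronous: report completion now, deliver to the peer later.
        self.pending.append((index, data))
        return True

    def flush(self):
        """Deliver all queued writes to the peer in their original order."""
        while self.pending:
            index, data = self.pending.popleft()
            self.peer.apply(index, data)


sync_peer, async_peer = Peer(), Peer()
Replicator(sync_peer, synchronous=True).write(0, b"a")
lagging = Replicator(async_peer, synchronous=False)
lagging.write(0, b"a")     # async_peer has not seen the block yet
lagging.flush()            # now both peers hold the same data
```

The trade-off is the usual one: asynchronous mode hides network latency from the writer, which matters for distant data centers, but writes still in the queue are lost if the local node fails before they are delivered.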

DRBD 9 also raised the maximum number of nodes in a synchronized storage cluster to 32 and introduced improvements in node resynchronization logic, locking mechanisms, network namespace support, automatic adjustment of node status based on activity, two-phase commits, and non-blocking distribution of updates.